You Can Reset a Leaked Password. You Cannot Reset Your Face.

LinkedIn headshots are no longer just part of your professional brand. They are biometric assets that can be scraped, modeled, and reused against you.

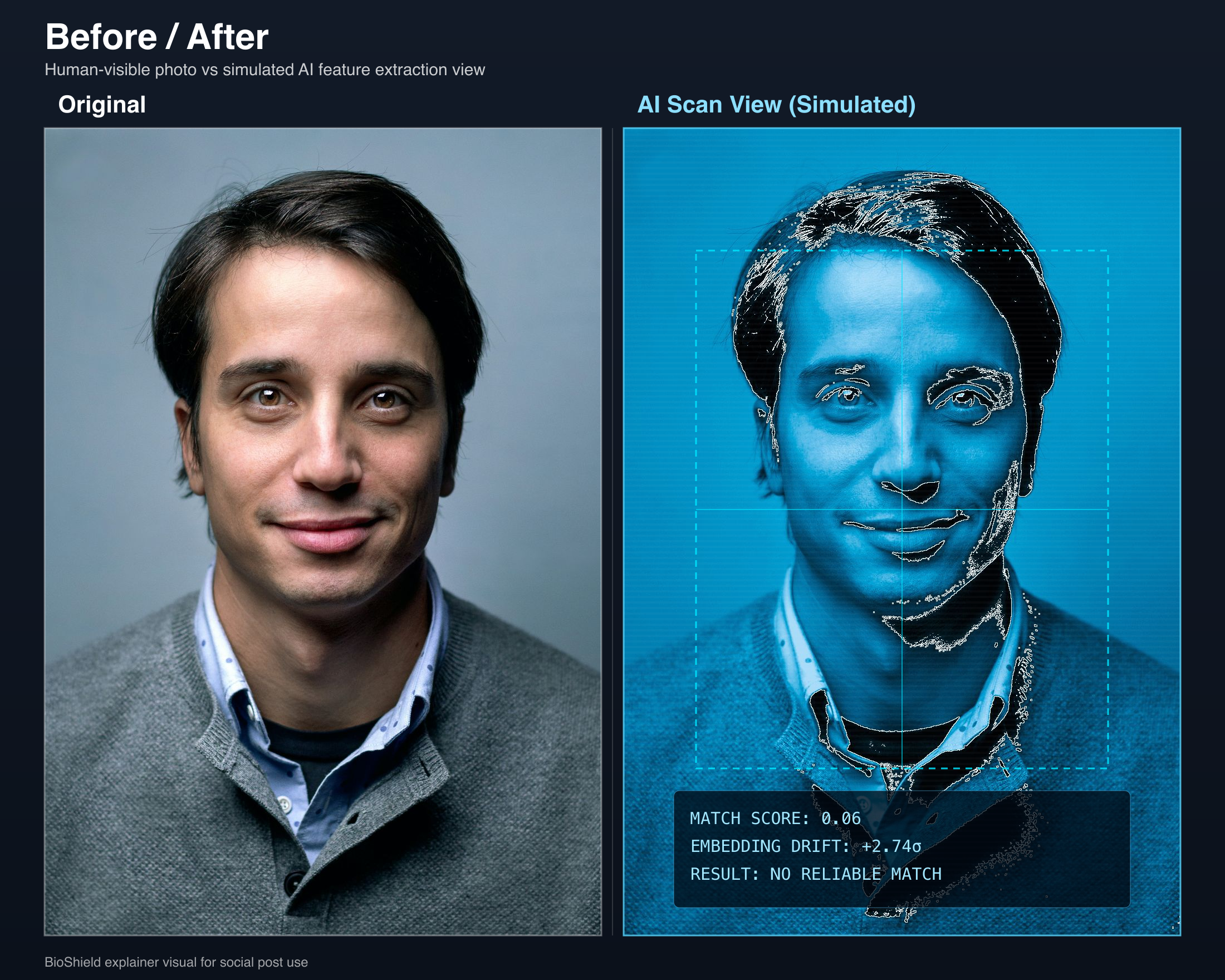

A profile photo can look normal to people while exposing a rich biometric signal to automated matching systems. That gap is the problem.

Most people still think about a leaked password and a public headshot as two very different problems. The password feels urgent because it is obviously security-related. The headshot feels harmless because it looks like branding.

That distinction is breaking down. Public profile photos are being scraped at scale, copied into datasets, and used to train or improve systems that identify, classify, and simulate real people. Your LinkedIn image is not just telling employers who you are. It is telling machines how to recognize you.

The Core Risk Is Permanence

If a password leaks, you rotate it. If a token leaks, you revoke it. But when a high-quality face image is scraped, there is no equivalent reset button. Once your biometric features are embedded into someone else's training set, watchlist, or matching pipeline, the problem becomes durable.

That permanence matters because facial data is multipurpose. The same headshot can help train recognition models, improve identity resolution, support impersonation workflows, or seed synthetic media. The image you uploaded for trust can become raw material for systems you never consented to.

Why This Is Bigger Than Fake Profiles

A scraped profile photo is not just a reputational nuisance. In practice, it can be reused in ways that have real security consequences.

- Identity verification abuse: Attackers can animate or re-render your face to test the limits of remote verification and liveness checks.

- Deepfake impersonation: A clean headshot can be enough to bootstrap convincing synthetic video, voice-linked personas, and executive impersonation attempts on calls.

- Fraud and access attacks: Reusable biometric data can support account takeover, social engineering, loan fraud, and attempts to access internal company systems.

The AI Safety Lesson Applies Here Too

Many recent AI failures point to the same pattern: optimization without context creates damage. Systems follow the signal they are given, not the human meaning around it. That is dangerous in operations, defense, and identity systems alike.

When your face becomes training data, you lose control over the context in which it is used. The model does not know whether the image came from a professional profile, a company bio, or a family photo. It only knows that your face improved its ability to detect, match, or synthesize a person.

That is why this issue should be framed as security, not aesthetics. A public headshot now behaves more like a reusable credential than a harmless avatar.

The Defensive Move Has To Happen Upstream

The goal is not to disappear from the internet. Most professionals cannot do that. The goal is to publish images that still work for people while degrading their value to unauthorized AI systems.

CloakBioGuard protects profile photos before they become useful training data. The image is cloaked so it remains usable in the real world but becomes significantly less reliable for facial-recognition and model-training workflows downstream. In other words, the defense happens before the scrape, not after the damage spreads.

That is the shift more people need to make. We already accept that passwords should be hardened before a breach. Profile photos deserve the same mindset.
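To make the idea of cloaking concrete, here is a minimal Python sketch of one general approach: change every pixel by at most a tiny amount while pushing the image's machine-readable "embedding" toward a decoy. This is an illustration only, not CloakBioGuard's actual method, which this article does not describe. The linear `embed` function, the `decoy` image, the random weights, and the `eps` budget are all assumptions chosen to keep the example small. Real face-recognition models are nonlinear networks, and a toy like this says nothing about how well any cloak holds up against them.

```python
import random

def embed(pixels, weights):
    """Toy linear 'face embedding' (an assumption, not a real model)."""
    return [sum(w * p for w, p in zip(row, pixels)) for row in weights]

def sq_dist(a, b):
    """Squared Euclidean distance between two vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def cloak(pixels, decoy, weights, eps=0.03):
    """Nudge pixels toward a decoy embedding, changing each pixel by at most eps."""
    target = embed(decoy, weights)
    current = embed(pixels, weights)
    residual = [c - t for c, t in zip(current, target)]
    # Gradient of the squared embedding distance per pixel (up to a factor of 2).
    grad = [sum(weights[k][i] * residual[k] for k in range(len(weights)))
            for i in range(len(pixels))]
    before = sq_dist(current, target)
    step = eps
    for _ in range(20):  # halve the step until the distance to the decoy drops
        candidate = [min(1.0, max(0.0, p - step * ((g > 0) - (g < 0))))
                     for p, g in zip(pixels, grad)]
        if sq_dist(embed(candidate, weights), target) < before:
            return candidate
        step /= 2
    return list(pixels)  # no improving step found; leave the image unchanged

# Hypothetical data: 64 grayscale "pixels" in [0, 1] and an 8-number embedding.
random.seed(0)
n_pixels, n_features = 64, 8
weights = [[random.gauss(0, 1) for _ in range(n_pixels)] for _ in range(n_features)]
photo = [random.uniform(0.2, 0.8) for _ in range(n_pixels)]
decoy = [random.uniform(0.2, 0.8) for _ in range(n_pixels)]

cloaked = cloak(photo, decoy, weights)
max_pixel_change = max(abs(a - b) for a, b in zip(photo, cloaked))
embedding_shift = sq_dist(embed(photo, weights), embed(cloaked, weights)) ** 0.5
print(f"largest pixel change: {max_pixel_change:.3f}")
print(f"embedding moved by:   {embedding_shift:.3f}")
```

The point of the sketch is the asymmetry: the per-pixel change is capped at `eps`, so a person sees essentially the same photo, while the numbers a matching system would compare have moved.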

Run a free scan on your current photo, then protect the version you actually publish to LinkedIn, company pages, and speaker bios.

cloakbioguard.com/scan